I had been meaning to write a primer on false positives for ages, because it’s a topic I knew well from my risk institute days, but then a few other websites and magazines started writing about it, so I thought there was no point. (I am slightly kicking myself for not getting in earlier with this.) But there still seems to be a lot of confusion around about false positives, and I thought it may still help if I write a really clear, basic and fairly non-technical guide to them (as some of the explanations I’ve seen aren’t as clear as they could be, or cover the topic fairly quickly).

A ‘false positive’ test result is a test result that comes back positive when the patient isn’t really positive. (The concept of this is easy enough to grasp; this isn’t the difficult bit.) There are various ways this can happen, and how it can happen will vary depending on the type of test it is. For example, the test may sometimes mistake a bit of a different virus for the virus it is supposed to be looking for. (There’s a list of things that can cause a false positive, as well as a false negative, here.) But we don’t need to go into this issue here.

The next concept to grasp is that of the ‘false positive rate’. This is where the main misunderstandings arise. It’s natural to think this refers to the percentage of positives that are false. On this mistaken understanding, if you have, say, a false positive rate of 1%, and you have 100 positive results, then 99 of those are true positives, and only 1 out of the 100 is a false positive. That is what Matt Hancock appeared to think the term meant when he was interviewed by Julia Hartley-Brewer on Talk Radio.

This isn’t what it refers to, though. It actually refers to the percentage of people who get tested who will be given a ‘false positive’ result. (Strictly speaking, it is the percentage of tested people without the disease who get a positive result, but when the disease is rare that is almost the same number.) So if you have a false positive rate of 1%, and you test 100 people, you will get about one false positive. That may not sound that different to the previous case, but it is, which you can see when you consider the number of false positives you will get when you test large numbers of people. Say you test 10,000 people. 1% of 10,000 is 100. So for every 10,000 tests you do, there will be about 100 false positives.
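To see the difference between the two readings in numbers, here is a minimal sketch in Python. The function names are purely illustrative, and it follows the simplification used in this guide of applying the rate to everyone tested.

```python
def false_positives_correct_reading(tests_run: int, false_positive_rate: float) -> float:
    """Expected false positives when the rate applies to the people tested."""
    return tests_run * false_positive_rate


def false_positives_mistaken_reading(positive_results: int, false_positive_rate: float) -> float:
    """False positives under the mistaken reading: a share of the positive results."""
    return positive_results * false_positive_rate


if __name__ == "__main__":
    # Correct reading: the count grows with the number of tests run.
    for tests_run in (100, 10_000):
        expected = false_positives_correct_reading(tests_run, 0.01)
        print(f"{tests_run} tests at a 1% rate: about {expected:.0f} false positives")

    # Mistaken reading: the count depends only on how many positives came back.
    mistaken = false_positives_mistaken_reading(100, 0.01)
    print(f"Mistaken reading, 100 positive results: about {mistaken:.0f} false positive")
```

The point of keeping the two functions separate is that they take different inputs: one starts from the number of tests, the other from the number of positives.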

This isn’t that much of an issue when a disease is rampant and you have a large percentage of people testing positive when they really have got the disease (‘true positives’). For example, say you test 10,000 people, and 3,000 test positive. The fact that 100 of that 3,000 are false positives won’t usually make much difference to policy in that scenario. 2,900 or 3,000, it doesn’t really matter. There are lots of people with the disease either way; the true positives swamp the false positives.

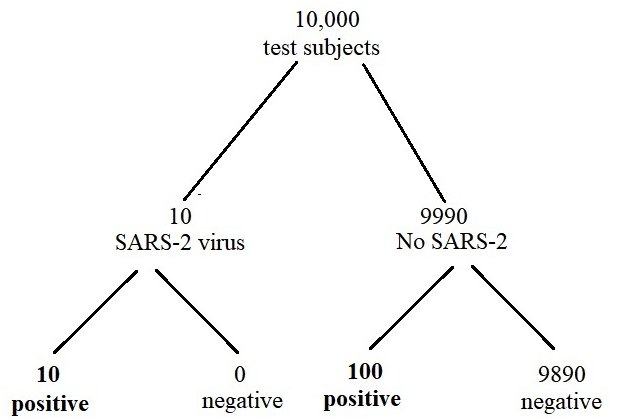

But when a disease is dying out, or is rare, then things are different. Suppose you test 10,000 people for X. Suppose X is rare at this point, and only 1 in 1000 people have it (ie. 0.1%, or to put it as a decimal fraction, 0.001). That means that we can expect to get 10 true positives from that 10,000. But if our false positive rate is 1%, then we will also get (as we saw above) 100 people getting false positives. That means out of our 10,000 tests, we get 110 positives, but only 10 of those are true positives. 100 out of the 110 positives – 91% – are false positives.
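The arithmetic for the rare-disease case can be sketched as follows. This is only an illustration: the function name is made up, it assumes no false negatives, and it applies the false positive rate to everyone tested, as the worked example does.

```python
def positive_breakdown(tests_run: int, prevalence: float, false_positive_rate: float):
    """Split the expected positives into true and false ones.

    Assumptions (simplifications, not a full model):
    - every infected person tests positive (no false negatives);
    - the false positive rate is applied to everyone tested.
    """
    true_positives = tests_run * prevalence
    false_positives = tests_run * false_positive_rate
    total_positives = true_positives + false_positives
    false_share = false_positives / total_positives
    return true_positives, false_positives, false_share


if __name__ == "__main__":
    # Disease X: 1 in 1000 people have it, 1% false positive rate.
    tp, fp, share = positive_breakdown(10_000, 0.001, 0.01)
    print(f"True positives:  {tp:.0f}")
    print(f"False positives: {fp:.0f}")
    print(f"Share of positives that are false: {share:.0%}")
```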

We can draw what’s called a ‘frequency tree’ to make this even easier to understand.

This is the sort of situation we currently face with SARS-CoV-2 (the virus that causes Covid). According to the ONS’s most recent estimate (18-24 September), only 1 in 500 people in the community (0.2%) is infected with SARS-CoV-2. So if we test 10,000 people we can expect that 20 will actually have it, and they will test positive (let’s assume there are no false negatives, in order to keep things simple). But then there are the false positives. The false positive rate for SARS-CoV-2 seems to be slightly less than 1% – that’s what Matt Hancock reported when he was interviewed recently by Julia Hartley-Brewer on Talk Radio. Let’s say it’s 0.9%. That means that if you test 10,000 people you will get 90 false positives.

That means you end up with 90 false positives plus 20 true positives, which added together gives you 110 positives. 90 out of 110 is 82%. 20 out of 110 is 18%. So less than one-fifth of the positive tests are real. So you can see that the issue of false positives is not just of academic interest. Most of the so-called ‘cases’ you’re currently reading about are actually just false positives.
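For anyone who wants to check this with the stricter textbook definition, in which the false positive rate applies only to the people who do not have the virus, here is a rough sketch. The function name and the `sensitivity` parameter are illustrative additions; the 0.2% prevalence and 0.9% rate are the figures used above. The share of positives that are real is often called the ‘positive predictive value’.

```python
def positive_predictive_value(
    tests_run: int,
    prevalence: float,
    false_positive_rate: float,
    sensitivity: float = 1.0,  # 1.0 means no false negatives, as assumed in the text
):
    """Return (true positives, false positives, share of positives that are real)."""
    infected = tests_run * prevalence
    uninfected = tests_run - infected
    true_positives = infected * sensitivity
    # Stricter definition: only uninfected people can produce a false positive.
    false_positives = uninfected * false_positive_rate
    ppv = true_positives / (true_positives + false_positives)
    return true_positives, false_positives, ppv


if __name__ == "__main__":
    tp, fp, ppv = positive_predictive_value(10_000, 0.002, 0.009)
    print(f"True positives:  {tp:.1f}")
    print(f"False positives: {fp:.1f}")
    print(f"Share of positives that are real: {ppv:.0%}")
```

With these inputs the false positives come out at about 89.8 rather than 90, so the simplification makes no practical difference when prevalence is this low.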

This wouldn’t matter if we were being sensible about Covid, which is, after all, not a dangerous disease for most people, and one which presents no threat whatsoever to society. But of course we’re not being sensible about it. The UK is, along with many other countries, pursuing a virtual zero-SARS-2 strategy, and freaking out when the virus shows any signs of slightly increasing in prevalence (even though the prevalence is, according to the ONS, extremely low). That makes the false positive issue a serious one. Massively important decisions are being made by the likes of Matt Hancock on the basis of positive test numbers, despite the fact that he fundamentally misunderstands the false positive issue.

Moreover, the false positive issue means that there will always be positive tests occurring even if in reality the virus has disappeared completely. And that means that we can never get out of this mess on the current rules.
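A small sketch of that floor effect, under the same assumptions as before (no false negatives, a 0.9% false positive rate). The function name is illustrative, and the prevalence values in the loop are just examples chosen to show the trend.

```python
def expected_positivity(
    prevalence: float,
    false_positive_rate: float,
    sensitivity: float = 1.0,  # assumed: no false negatives
) -> float:
    """Expected fraction of tests that come back positive."""
    true_part = prevalence * sensitivity
    false_part = (1 - prevalence) * false_positive_rate
    return true_part + false_part


if __name__ == "__main__":
    # Example prevalences, falling to zero (virus gone completely).
    for prevalence in (0.002, 0.0005, 0.0):
        rate = expected_positivity(prevalence, 0.009)
        print(f"prevalence {prevalence:.2%}: about {rate:.2%} of tests positive")
```

Even at a prevalence of zero the expected positive rate does not drop to zero; it settles at the false positive rate itself.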

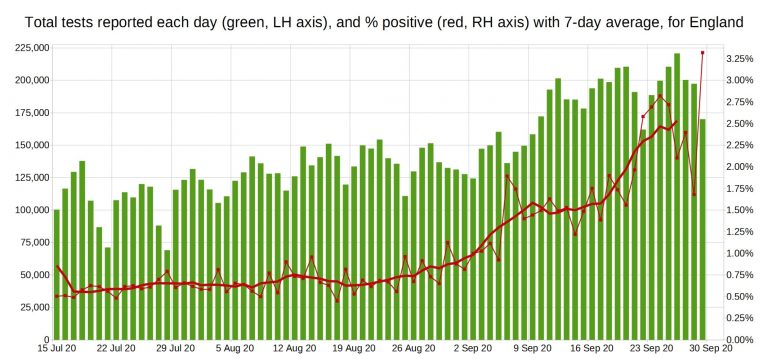

Take a look at this graph that Christopher Bowyer recently did of the rate of English tests that are positive.

From the left-hand side of the graph (which starts in mid-July) we can see that the percentage of positive tests is actually less than 1% all the way through to the start of September. Only in September does it rise above 1%, getting up to 2.5% by the end of September. In summer then, Covid barely existed. Most of the positive tests were false positives. Not all, though; the ONS’s seroprevalence surveys tell us that there was a small amount of it around in summer (about 1 in 2000 people, or 0.05%), and seeing as it started rising again in autumn it clearly hadn’t died out completely. But most were. Even now, the great majority of tests are false positives.

So that’s a basic introduction to this topic, which I hope makes it clearer for those who weren’t that sure about what it was all about.

Appendix A (if you’re keen on some of the complications):

I have made some simplifications here to make the basic concepts easier to grasp. One I should mention is that I have ignored the fact that in theory Pillar 1 tests (the ones for those in hospital) should have a higher rate of people who actually have the virus than the community prevalence, because you’d expect more people in hospital to have it than a random sample of people in the community, especially in spring when there were far more people with Covid in the hospitals.

With Pillar 2 (community testing) you should in theory also see the higher rate of Pillar 1 because in theory only people who are symptomatic are supposed to get tested. But as we know many asymptomatic people in the community got tested regardless, so in reality the number of Pillar 2 subjects with the disease will actually be more like the background prevalence.

On false positive rates, I should point out that for Pillar 1 tests the UK government was claiming that the false positive rate was 0.4%, not 0.9%. It’s Pillar 2 tests that are supposed to have a false positive rate of about 0.9% (some say 0.8%). I ignored this for simplification purposes, and it doesn’t change the general picture. It is probably correct that the false positive rate for Pillar 1 tests is more like 0.4%, because the positive rate for the tests all summer was around this.
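The reasoning here is that the observed positive rate puts a ceiling on the false positive rate, since the false positives are only part of the positives. As a rough sketch (the function name is illustrative, and the prevalence values tried are hypothetical, because the true prevalence among those tested is not known):

```python
def implied_false_positive_rate(
    positivity: float,
    prevalence: float,
    sensitivity: float = 1.0,  # assumed: no false negatives
) -> float:
    """False positive rate implied by an observed positive rate.

    Rearranges: positivity = prevalence * sensitivity
                             + (1 - prevalence) * false_positive_rate
    """
    return (positivity - prevalence * sensitivity) / (1 - prevalence)


if __name__ == "__main__":
    observed = 0.004  # the roughly 0.4% positive rate mentioned above
    # Hypothetical prevalences among those tested.
    for prevalence in (0.0, 0.0005, 0.002):
        fpr = implied_false_positive_rate(observed, prevalence)
        print(f"prevalence {prevalence:.2%}: implied false positive rate {fpr:.2%}")
```

If nobody tested had the virus, the implied rate equals the observed 0.4%; any real infections among those tested push the implied rate lower.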

Another issue is that we don’t really know for sure what the false positive (and negative) rates are for various tests. (And that’s not even mentioning issues like how many cycles should a PCR test be run for, or the fact that the vast majority of people with a positive test result, even a true one, are completely fine). If you’ve read this far you’ll appreciate that a lack of reasonable certainty on these issues puts a very large spanner in the works. If you have a Spectator subscription you can read what Prof Carl Heneghan has to say on this issue here, and Dr Clare Craig here.

Something else that might be useful for you to know: the true positive rate is sometimes called the ‘sensitivity’, and the true negative rate is sometimes called the ‘specificity’.
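These terms are easiest to pin down from the four possible outcomes of a test. The sketch below uses the standard definitions; the class name and the counts in the example are hypothetical and are not taken from any real data.

```python
from dataclasses import dataclass


@dataclass
class ScreeningCounts:
    """Counts of the four possible outcomes from a batch of tests."""
    true_positives: int
    false_negatives: int
    true_negatives: int
    false_positives: int

    def sensitivity(self) -> float:
        """True positive rate: share of infected people who test positive."""
        return self.true_positives / (self.true_positives + self.false_negatives)

    def specificity(self) -> float:
        """True negative rate: share of uninfected people who test negative."""
        return self.true_negatives / (self.true_negatives + self.false_positives)

    def false_positive_rate(self) -> float:
        """Share of uninfected people who test positive (1 - specificity)."""
        return self.false_positives / (self.false_positives + self.true_negatives)


if __name__ == "__main__":
    # Hypothetical batch: 20 infected (2 missed), 9980 uninfected (90 wrongly flagged).
    counts = ScreeningCounts(
        true_positives=18, false_negatives=2, true_negatives=9890, false_positives=90
    )
    print(f"Sensitivity:         {counts.sensitivity():.1%}")
    print(f"Specificity:         {counts.specificity():.2%}")
    print(f"False positive rate: {counts.false_positive_rate():.2%}")
```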

A good book that covers the false positive issue in general is Reckoning With Risk: Learning to Live With Uncertainty by Gerd Gigerenzer. (The issue of false positives is especially crucial to deciding when cancer screening makes sense.) The really amazing thing about Gigerenzer’s book, though, is that he and his team uncovered the fact that the great majority of doctors and public health officials and politicians didn’t have a clue about false positives and what it really means and how to calculate with it. Hancock is not alone in his ignorance (although given his position and the context his ignorance is inexcusable).

If you want more detail on the false positive issue specifically in regard to SARS-2, try Michael Yeadon’s article on Lockdown Sceptics.


38 thoughts on “A Beginner’s Guide to False Positives”

Could the false positive rate vary from batch to batch of tests?

I would not expect a politician to understand this for himself. I would expect the experts briefing him to din it into him.

It’s a problem with how ministers are appointed. They are chosen because they are all-round competent and politically useful, with no regard to their understanding of their brief. If, as often happens, they have not mastered their brief prior to taking office they swiftly get captured by the Civil Service, and become mouthpieces.

I’m going to ask for a little forbearance here – I’m a simple soul. This is very much tongue-in-cheek. I think.

It’s been apparent for some time now that the PCR test is not diagnosing COVID and is simply finding the (artificially amplified) numbers of RNA fragments probably resulting from other coronavirus infections in the past, or indeed from COVID infection more recently.

Therefore all coronaviruses have much of their RNA “genome” in common.

I am human (despite my wife’s occasional doubts). I know – because much better minds than mine have demonstrated it – that I share >98% of my genome with chimpanzees. I also know (same reason) that DNA and RNA are not the same thing but I propose to let that detail pass.

So if in a DNA test a fragment of my genome is found to match that of a fragment of DNA on the chimpanzee’s equivalent of the same gene, can the logic of PCR tests be applied to prove that the chimpanzee is testing as a false positive for being human? Because if it is so, there are questions we need to answer about Matt Hancock….. And heaven help us if it’s lizard DNA……. someone find Icke, quick 🙂

Forgive me if I’m embarrassing myself here without knowing it…….. but I’m a little puzzled as to whether this is a reasonable analogy. And if not, why not?

Just a question on this. Very well explained and understandable, but one thing that puzzles me is somewhere like New Zealand. They are testing around 4000 people per day and yet they only record zero or maybe 1 or 2 or 3 cases a day. How do they avoid the false positives ? Is it because any positive is re-tested ?

“And that’s not even mentioning issues like how many cycles should a PCR test be run for”

Which is the single most important issue we are facing. Has any minister (or government scientist) ever publicly discussed this, let alone admitted how many cycles the labs are running, and even if they are all comparable?

The Usual Suspects have messed up the language, as George Orwell observed decades ago. Properly, a “case” is a person with signs & symptoms of an infection. Then a diagnostic test confirms that the infection is due to a specific virus or bacteria — because many different infections can cause similar signs & symptoms. A healthy person with a positive SARS test is not a “case” — not yet, and maybe never.

False Positives — anyone old enough to remember when car alarms were first introduced knows all about False Positives. Countless people were wakened up in the night when a gust of wind hit a neighbor’s car. The end result was that everyone ignored car alarms, assuming (usually correctly) that the noise was no more than an annoying nuisance rather than a theft in progress. Unfortunately, the inappropriate governmental reactions to Covid testing False Positives are causing bankrupt businesses, unemployed human beings, medical & psychological distress, and impending economic disaster — not just disturbed nights’ sleep.

Pat: “Could the false positive rate vary from batch to batch of tests?”

That is a highly perceptive question. Especially in an environment where the rate of testing has been increasing exponentially. That necessarily means that existing testing labs are running harder, tired lab techs are working overtime, new people are being rapidly trained, equipment is being brought out of storage or repurposed, time for calibration of equipment and cleaning of laboratories is being squeezed.

Under those real world circumstances, it would be reasonable to expect that the rate of False Positives would be increasing.

Pillar 2 (community) positives were running at around 4 times the rate of Pillar 1 (hospitals) in August before the publication of the separate stats was stopped.

Also, national figures are not much help in seeing what is happening at a sub-national level. Do all the testing labs have the same % positive results? How many positives are asymptomatic in each area? Are there any unexplained outliers in the data?

Given that all the COVID restrictions, and the economic damage, are being driven by rising “case” numbers you’d think someone in government would be looking at how accurate the PCR tests actually are.

This is not just an interesting academic debate about false positives, it has real world consequences. Why are the newspapers and TV not asking these questions?

If you Don’t have a Spectator subscription you can read what Prof Carl Heneghan has to say on this issue here, and Dr Clare Craig here

I do not believe the Gov’ts claim of <1% false positive. RT-PCR tests are 1% – 4% FP in lab conditions

Another simple explanation

https://www.conservativewoman.co.uk/false-positives-and-the-never-ending-epidemic/

The Raab & Hancock Show: Coronavirus False Positives

https://www.youtube.com/watch?v=dnQtXPH2fr8

These clowns are running, no ruining, UK

@Hector

Thanks for this. Follow up on why RT-PCR tests should not be used for diagnosis, and inventor furious it was being misused, would be helpful

Hector, do we know what the false negative rate is? We need to take that into account in understanding the prevalence of the virus.

>I do not believe the Gov’ts claim of <1% false positive. RT-PCR tests are 1% – 4% FP in lab conditions

The false positive rate can't be any higher than the lowest recorded positive rate, which is around 0.4%. I don't know about the 1-4% figure but bear in mind that the labs use techniques to bring the false positive rate down.

>Could the false positive rate vary from batch to batch of tests?

It certainly can (and does) vary from lab to lab. It could also vary from batch to batch, eg. one batch gets contaminated. But I don’t know how serious an issue this is in reality.

As for the false negative rate, apparently this is very hard to estimate and could be way out.

https://www.wsj.com/articles/questions-about-accuracy-of-coronavirus-tests-sow-worry-11585836001

Malcolm Kendrick reports a systematic review that says the false negative rate is between 2% and 29%.

https://drmalcolmkendrick.org/2020/09/28/false-positive-tests/

I’ve no problem with this general analysis in terms of probabilities..

But (big but) the issue with PCR testing is the long chain of events, each of which can entail error.

In the end, the crucial issue of what constitutes a ‘real’ positive (linked to symptomatic illness) is unknown, with the number of cycles of amplification being an uncontrolled variable, as is the question of what RNA was amplified in the first place.

Against this background of uncalibrated error sources, the detail of probabilities lies in the same disaster area as deckchairs on the Titanic.

My query is the same as John Church’s. Here in South Australia we are testing about 2000 to 3000 people a day but are recording no positives. How is this possible if there is a 1% false positive rate?

I suspect in Australia and NZ they are being very careful with their tests, doing lots of re-testing and cross-testing maybe not running too many cycles. This is just a hunch, though. Remember that the government in NZ is desperate to avoid any positives, because they want to say that their strategy has worked and they’ve achieved zero Covid, whereas in the UK the government’s intention is clearly to ramp up the fear by showing that Covid is still around.
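The puzzle in the South Australia question can be put in numbers. This is only a back-of-envelope sketch: the function name is illustrative, it assumes every test is independent and that nobody tested is infected, and the 0.01% rate in the second example is a hypothetical figure for a regime with careful re-testing, not a reported one.

```python
def probability_of_no_false_positives(tests_run: int, false_positive_rate: float) -> float:
    """Chance that a day's tests produce zero false positives.

    Assumes independent tests and no infected people among those tested.
    """
    return (1 - false_positive_rate) ** tests_run


if __name__ == "__main__":
    # 2000 tests a day at a 1% false positive rate.
    p_one_percent = probability_of_no_false_positives(2000, 0.01)
    print(f"1% rate, 2000 tests: chance of zero positives = {p_one_percent:.2e}")

    # Hypothetical much lower effective rate, e.g. after confirmatory re-testing.
    p_low = probability_of_no_false_positives(2000, 0.0001)
    print(f"0.01% rate, 2000 tests: chance of zero positives = {p_low:.0%}")
```

At a true 1% rate a day with no positives at all would be practically impossible, so regular zero-positive days imply that the effective false positive rate there is far below 1%, whatever the reason.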

One thing I’m not clear on is whether the tests can mistake other viruses (such as the common cold) for CV19. I’ve heard it said that they can, but I’m not sure if that’s true. It would be good for some authoritative information on that subject.

Officially they don’t. I’ve seen this made clear in government announcements, and someone sent me an e-mail they got from the government confirming this after they enquired. The tests are for specific bits of SARS-CoV-2. However, that doesn’t mean it can’t occasionally happen that a different virus with a similar bit of RNA triggers a positive. But generally the common cold doesn’t result in a positive result (and if it did we’d be seeing large numbers of positive tests, because lots of school kids currently have colds).

Hi, as usual great info on the site

Just a couple of points. A recent lancet article also said false positive is up to 4%

https://www.thelancet.com/journals/lanres/article/PIIS2213-2600(20)30453-7/fulltext

Also, I’m not sure the test is for a specific sars cov2 virus. There was a doctor explaining this to a government inquiry panel in Germany.

I’ll try to find the link but she said they use a generic Coronavirus sequence from a Database as they had no specific “covid19” sample!!

Hence the test results pick up any Coronavirus.

Thanks

This is the enquire in Germany video. First few minutes is a bit dry. I almost gave up, but then one of the panel asked the doctor to start again “in English” as she was too technical.

From then on she described how the test is done using a generic Coronavirus. The panel was shocked.

https://vimeo.com/443416775

Who’s the doctor? Is she involved with the tests, or just offering an opinion?

Sorry don’t know the full cast list.

This is the blurb on the video link

“In Germany, a group of lawyers have formed the independent Corona Committee for the purpose of gathering factual evidence about the pandemic in order to legally challenge the authorities as to the suitability of the continuing measures. Details in english about the Corona Committee can be found here: en.corona-ausschuss.de

The 4th evidence gathering session took place on 24.07.2020 and was streamed on Oval Media’s YouTube channel. Link here: youtu.be/pKllldIiMpI

This video is an edited abstract of the live stream coverage, focussing only on the part of the evidence concerning the controversial use of PCR tests in an effort to measure numbers of new infections.

To make this abstract accessible to english language speakers, english sub-titles have been added.

The ‘Implications’ slide at the end of this video in yellow text is my own summary of the evidence and does not come from the Corona Committe. The Committee will present its overall findings at a later date to be announced.”

The dr is a virologist and is introduced in the first minute.

She specifically says they take a primer “similar” to the virus and as there are lots of coronaviruses it’s easy to find something similar.

@Hector

I concur with @Steve I’ve read many papers quoting the RT-PCR test as 1% – 4% FP. Many videos too

On corona common cold, the test detects viral fragments and can give FP for cold. Also, at least one human DNA fragment is identical to a C-19 fragment

Today

Please investigate RT-PCR test misuse and fallibility. I’ve posted many links in past. With SNPland in lockdown again from 6PM on Friday we need the test exposed as a failure

Except PCR isn’t a test: “With PCR you can find almost anything in anybody”, that’s Kary B Mullis, Nobel Laureate for Chemistry and inventor of PCR which isn’t supposed to be a *test*, and certainly not diagnostic. “PCR test” is a deliberate corruption of the PCR amplification technology by German virologist Christian Drosten in collusion with WHO for which criminal charges are being brought – see link below.

Not only did Mullis invent PCR but he was a climate ‘denier’ who said Aids was a “sham”. Ought to be a godsend to a ‘sceptic’ journalist. But search for ‘Kary Mullis’ on Lockdown Sceptics: zilch. There are a few mentions of PCR as mentioned above by PCar but which barely scratch the surface, even though the current restrictions are predicated *entirely* on PCR “tests” – took less than half an hour online to discover it’s a complete crock.

‘Lockdown Sceptics’ is no different to every other media ‘sceptic’ source where the premise is ‘lockdown’ as ‘mistake’. In spite of all the evidence, hidden in plain sight, e.g. UN Agenda 21, WEF etc, never mind moving the goal posts: “flattening the curve” (March); “Saving Lives” (June); “cases” (September), we’re supposed to believe it’s not engineered to demoralise the populace and decimate small business.

Wikipedia do a typical hatchet job on Mullis:

**Mullis expressed disagreement with the scientific evidence supporting climate change and ozone depletion, the evidence that HIV causes AIDS, and asserted his belief in astrology**

The most forensic indictment of the almighty fraud of Covid, PCR especially, I know of comes in a 49 minute talk from a German lawyer: ‘Crimes against Humanity’. I’d reckon no more than 10% of what he says has been covered by any media journalist, and that goes for the more thoughtful ones like Peter Hitchens as much as the more transparent phonies like Toby Young, James Delingpole et al.

His background is corporate fraud including: “Deutsche Bank, formerly one of the most respected banks, today one of the most toxic criminal organisations in the world.” Again the fraud of the City / banksters is in public domain just unreported by plutocrat media – how could it be otherwise? Brothel-keepers don’t chastise fornicators.

Either this man isn’t telling the truth or the fearmongering is preordained and deliberately orchestrated, and media ‘sceptics’ are lying at least by omission, i.e. business as usual, especially ‘independent’ outfits like UnHerd or IEA. The one site I know of to consistently publish the truth with well-referenced sources is Off-Guardian.

A perfect antidote to all the media bullshitters. It’s quite long as he goes into the background, not least of PCR and WHO collusion in the “test”. The meat of the PCR allegations is at 22:40. He actually quotes from official sources to support his case on the worthlessness of PCR as a diagnostic tool:

‘Crimes against humanity’

https://www.youtube.com/watch?v=kr04gHbP5MQ&t=1160s

>Please investigate RT-PCR test misuse and fallibility. I’ve posted many links in past.

You clearly don’t read my Twitter!

Hector: “Malcolm Kendrick reports a systematic review that says the false negative rate is between 2% and 29%.”

If the false negative rate is higher than the false positive rate then surely that means actual infections are higher than reported?

@Sean, Hector

I’ve seen many pieces on PCR test fallibility on Lockdown Sceptics, but as you say both there and elsewhere inventor Kary Mullis being furious that it was being misused is rarely or never mentioned. He was campaigning against its use as a diagnostic test since before it was used to “Prove” someone had HIV

Many PCR pieces at Lockdown Sceptics are in the Daily News “Round-Up” section

Another article today

Matt Hancock’s fantasy guide to Covid false positives, PCR 7% rate, Covid-19, Sky News Kay Burley

https://www.youtube.com/watch?v=-5DN1lds2f4

Hancock repeatedly ignores question and tries to rabbit hole. Well done Ms Burley for refusing to follow him down rabbit hole

@Hector

Twitter? Correct, too time consuming for info gleaned

HOCL fogging: Fast, safe, efficient- not used by NHS, GPs. Why?

Flu Vaccination: In SNPland Sturgeon has told GPs Not to provide it

>This is the enquire in Germany video. First few minutes is a bit dry. I almost gave up, but then one of the panel asked the doctor to start again “in English” as she was too technical.

>From then on she described how the test is done using a generic Coronavirus. The panel was shocked.

>https://vimeo.com/443416775

Steve, is this the right link? It was very interesting, but there was nothing in English.

>From then on she described how the test is done using a generic Coronavirus.

From reading up on some people involved in this very field this does not seem to be true, they are using specific segments from the SARS-CoV-2 genome.

@Pcar

Lockdown sceptics, like Free Speech Union is a classic front or psyop – psychological operation – straight out of the communist playbook: to shift public opinion in a pre-determined direction.

In the case of Lockdown sceptics it’s to centre the debate around ‘lockdown’ as an excessive response to the virus, deflecting attention from the globalist / communist agenda, and the vested interests behind preventive measures. Compare and contrast with Off-Guardian.

Similarly Free Speech Union redefines the parameters of permissible speech. No better example of how this works than our exchange on here recently.

I cited examples of men imprisoned for incorrect “racist” speech ignored by FSU. You replied that FSU had successfully won a case where a man had lost his job for refusing to support BLM and was reinstated. See how it works?

Meanwhile Jake Hepple was sacked from his job after he sponsored a white lives matter banner as players of his local team bent the knee for black lives matter. Not only was Hepple slaughtered in the media not least the Daily Telegraph, and sacked from his job, but the Sun and Daily Mail went for his girlfriend and got her sacked, too.

Kin punishment has been a characteristic of communist regimes in the last century e.g. Russia, China, North Korea. Not a squeak from Free Speech Union on behalf of either Hepple or his girlfriend.

People say oh how can Toby Young be communist? Call him something else. I’m merely describing the facts.

The claims made by German lawyer Dr Reiner Fuellmich re PCR aren’t that it’s ‘inaccurate’ or ‘fallible’ but fraudulent and criminal. Either he’s not telling the truth or Lockdown Sceptics et al are lying by omission.

No different in principle to FSU. We were supposed to be grateful that Jake Hepple didn’t get his collar felt by the police for his banner. FSU is complicit in what it ostensibly opposes: political persecution purely for speech. And it’s the same with PCR tests on which the entire Covid fraud is premised.

Because if PCR tests *are* a fraud, i.e. the virus can’t be tested for then there *is* no virus, in that its presence can’t be proven in any particular case. That’s why they’re talking about the ‘fallibility’ of the test.

It’s akin to ‘hide the decline’ in climate. If the climate’s *not* getting warmer global warming’s a crock. Thus mutation to ‘climate change’. Similarly “flatten the curve” evolved into “saving lives”. PCR is not fallible – it’s not a test.

If the presence of the virus can’t be established, that immediately scuppers the notion of a vaccine, ‘test and trace’ or indeed *any* preventive measures.

The claims by the lawyer, all referenced, totally undermine PCR and by extension Covid. But 90% of what he says hasn’t even been mentioned on Lockdown Sceptics.

If Lockdown Sceptics were concerned for truth they’d have exposed the facts about PCR long ago, not least its inventor’s insistence that it had no diagnostic value. As I said earlier, I searched Lockdown Sceptics for ‘Kary Mullis’ : 0.

This is from a long Tweet thread by Dr Michael Yeadon one of the scientists cited by the lawyer:

**PCR swab test is in my view criminal. It’s not a mistake. They know exactly what the effect of ever increasing ‘cases’ are each day: its to keep you frightened & to render you receptive to vaccination which most of us definitely do not need.**

Notable that Yeadon is retired just as so many ‘climate’ whistle blowers were emeritus.

This is from an article I bookmarked 3 months ago: ‘No One is Dying from Corona Virus’ quoting Dr Stoian Alexov, President of the Bulgarian Pathology Association:

“What all of the pathologists said is that there’s no one who has died from the coronavirus. I will repeat that: no one has died from the coronavirus.”

[With the flu] we can find one virus which can cause a young person to die with no other illness present […] In other words, the coronavirus infection is an infection that does not lead to death. And the flu can lead to death.”

His point is that there is no proof that anyone died from the virus because they can’t isolate it. This isn’t even to do with PCR as such but with identification. Again if the virus can’t be identified how can it be tested for?

That’s from Off-Guardian whose content reflects the claims of the German lawyer, that it’s a fraud in the service of vested interests, in contrast to Lockdown Sceptics which diverts notice away from the vested interests. Either they are lying or Lockdown Sceptics like the rest of the “lying media” (German lawyer) is a sham.

By the way, I’m not suggesting that Toby Young or Sun or Daily Mail editors or journalists are communist in any ideological sense merely in their deeds. The same is no doubt true of the North Koreans and Russians who engaged in the same behaviour but whom we have no problem calling ‘communist’. if it walks like a duck etc. The best term for TY is probably ‘grifter’.

https://off-guardian.org/2020/07/02/no-one-has-died-from-the-coronavirus-president-of-the-bulgarian-pathology-association/#comment-203305

@Sean

Yes, there is lot of doing this, but not that. However, nobody can do all.

FSU for now is concentrating on Ofcom censorship of anti-Gov’t/WHO Covid-19 reports, speakers

LS on, as name implies, Lockdowns, closures etc based on fiddled inaccurate, unscientific data so that people can see the “Consensus” is False

HD and LR on real world evidence of Gov’t & MSM lying and scare mongering making healthy public suffer

imo all doing a good job on limited budgets and constant threat of being shut down

The Freespeech rallies are shutdown by arrest of speakers. However, they’re usually released in 22-23 hours without charge as Gov’t fear Judge & Jury defeat

The real punishment is police seizing all (hired or personal) PA Equipment and IT equipment as evidence – no equipment, no rally

There is no point repeatedly raising same side issues, it distracts and diverts nuanced discussion of main issue. Please stop, do it on Arrse instead

Let’s call a spade a spade: an outfit campaigning for ‘free speech’ which limits its advocacy to politically convenient causes is a lie, a grift, a sham, operating under false pretences.

Lockdown Sceptics is more deception than outright lie, because the restrictions can’t be properly understood independently of the interests driving them.

That’s why Off-Guardian has more truth value than LS. I don’t generally share the political outlook of its contributors, but truth is truth regardless of source. Jeremy Corbyn’s politics turn my stomach but he tells truth about City of London racket even if it’s in the service of what for me is a loathsome cause.

Political truth about Covid is coming more from left wing radical Piers Corbyn types who are excluded from the media. ‘Sceptic’ or ‘right wing’ nomenklatura grifters like Toby Y or James D are bound to deceit as a condition of *not* being excluded.

No one with a public profile can defend ‘free speech’ anymore than he can be open about globalist agenda, because to be denounced as “racist” or “conspiracy theorist” is to be ‘cancelled’ from public life / media altogether.

They merit those labels by default anyway in virtue of being white / ‘sceptic’. Which in turn serve to define the bounds of political discourse censoring patriots and globalist agenda from public realm. They are ‘gatekeepers’ who can’t but be complicit in what they otherwise oppose.

Sean. When you say the restrictions can’t be properly understood independently of the interests driving them you do of course mean the interests you believe are driving them. But the policy of Lockdown Sceptics is to oppose the restrictions on the basis of factual evidence regardless of what anyone may or may not consider to be the motives of those imposing them. So there is no deception and while you are clearly genuine in your beliefs I think you need to recognise that many who oppose the restrictions would be put off supporting LS if it were to limit its appeal to those who think as you do.

Whilst I respect his right to free speech and his opinion (!), I feel Sean Lydon does over-analyse things, and I do agree with David Norman.

My take is more basic-Lockdown Sceptics may not be perfect, but anyone who opposes this ridiculous nonsense, in whatever manner possible, is alright by me. I don’t care what Mr. Young’s motives are, he may want to take over the world for all I know, but his site is giving some hope to those of us who despair of “our” government and its insane policies.

I fully support LS.
